Track Experiment Results in CI

Instead of deploying blindly and hoping for the best, you can validate changes with real data before they reach production. Create experiments that automatically run your agent flow in CI, test your changes against production-quality datasets, and get comprehensive evaluation results directly in your pull request. This ensures every change is validated with the same rigor as your application code.

How It Works

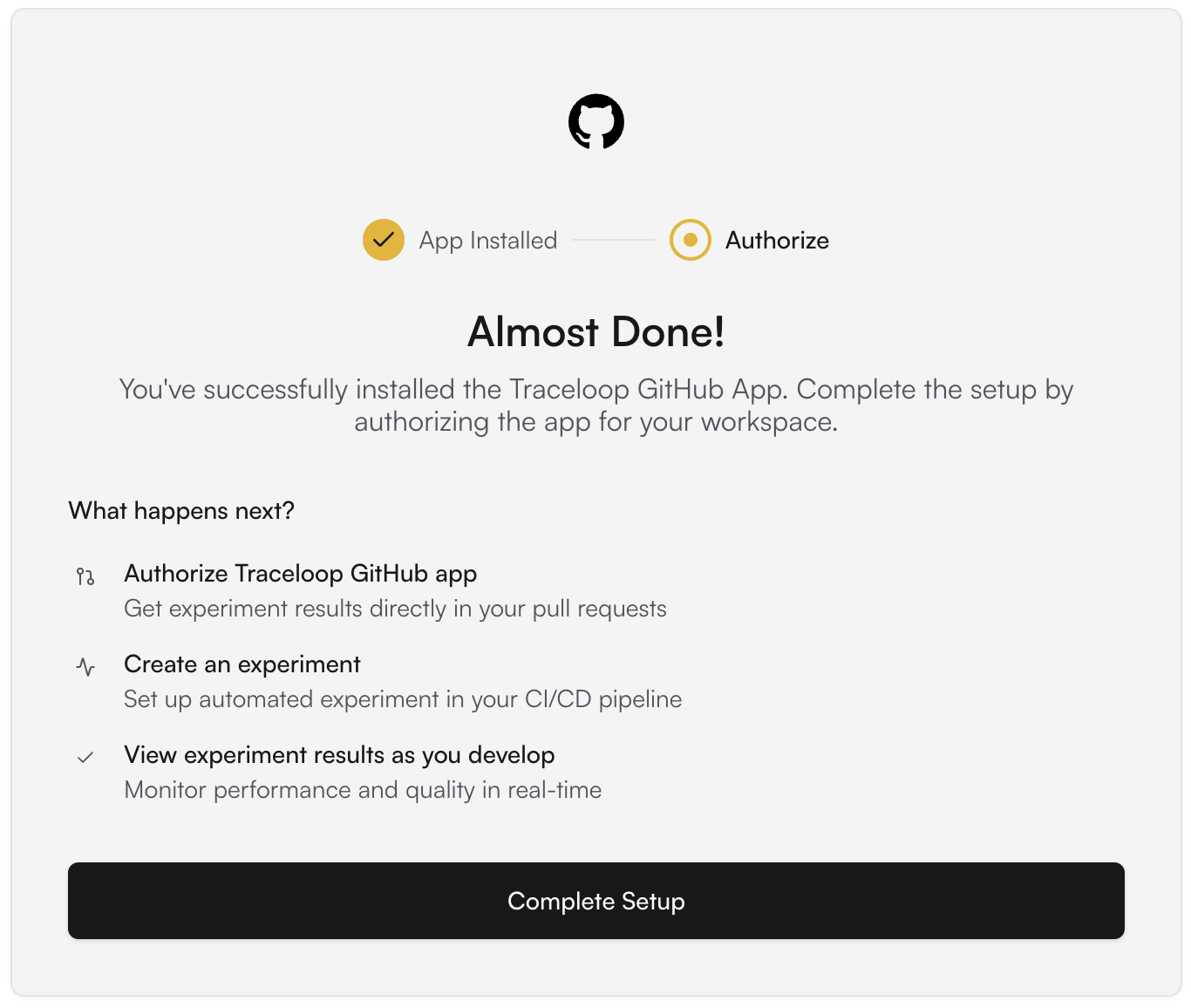

Run an experiment in your CI/CD pipeline with the Traceloop GitHub App integration. Receive experiment evaluation results as comments on your pull requests, helping you validate AI model changes, prompt updates, and configuration modifications before merging to production.

Install the Traceloop GitHub App

Go to the integrations page within Traceloop and click on the GitHub card. Click “Install GitHub App” to be redirected to GitHub, where you can install the Traceloop app for your organization or personal account.

You can also install the Traceloop GitHub App here.

Configure Repository Access

Select the repositories where you want to enable Traceloop experiment runs. You can choose:

- All repositories in your organization

- Specific repositories only

Permissions Required: The app needs read access to your repository contents and write access to pull requests to post evaluation results as comments.

Create Your Experiment Script

Create an experiment script that runs your AI flow. An experiment consists of three key components:

- Dataset: A collection of test inputs that represent real-world scenarios your AI will handle

- Task Function: Your AI flow code that processes each dataset row (e.g., calling your LLM, running RAG, executing agent logic)

- Evaluators: Automated quality checks that measure your AI’s performance (e.g., accuracy, safety, relevance)
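The three components above can be sketched in plain Python. This is a minimal illustration of how a dataset, a task function, and evaluators fit together, not the Traceloop SDK API: every name here (`DATASET`, `task`, `contains_expected`, `non_empty`, `run_experiment`) is hypothetical, and the task returns canned answers where a real script would call your LLM, RAG pipeline, or agent.

```python
from typing import Callable, Dict, List

# Dataset: hypothetical test inputs standing in for real-world scenarios.
DATASET: List[dict] = [
    {"question": "What is the capital of France?", "expected": "Paris"},
    {"question": "What is 2 + 2?", "expected": "4"},
]


def task(row: dict) -> dict:
    """Task function: a stand-in for your AI flow.

    A real implementation would call an LLM or agent here; this sketch
    returns canned answers so it runs without network access.
    """
    canned = {
        "What is the capital of France?": "The capital of France is Paris.",
        "What is 2 + 2?": "2 + 2 equals 4.",
    }
    return {"answer": canned.get(row["question"], "")}


def contains_expected(row: dict, output: dict) -> bool:
    """Evaluator: passes when the expected string appears in the answer."""
    return row["expected"].lower() in output["answer"].lower()


def non_empty(row: dict, output: dict) -> bool:
    """Evaluator: passes when the answer is not blank."""
    return bool(output["answer"].strip())


EVALUATORS: Dict[str, Callable[[dict, dict], bool]] = {
    "contains_expected": contains_expected,
    "non_empty": non_empty,
}


def run_experiment(dataset, task_fn, evaluators) -> List[dict]:
    """Run the task on every row and score each output with every evaluator."""
    results = []
    for row in dataset:
        output = task_fn(row)
        scores = {name: fn(row, output) for name, fn in evaluators.items()}
        results.append({"input": row, "output": output, "scores": scores})
    return results


if __name__ == "__main__":
    for result in run_experiment(DATASET, task, EVALUATORS):
        print(result["input"]["question"], result["scores"])
```

In an actual experiment script, the loop in `run_experiment` would be replaced by the Traceloop SDK's experiment runner, which also reports the results back to Traceloop.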

Set up Your CI Workflow

Add a GitHub Actions workflow to automatically run Traceloop experiments on pull requests.

Below is an example workflow file you can customize for your project:

ci-cd configuration
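A minimal sketch of what such a workflow might look like, assuming a Python experiment script. The file path `experiments/run_experiment.py`, the `requirements.txt` file, the Python version, and the job and step names are assumptions for illustration; adjust them to match your repository. The secret names are the ones mentioned in this guide.

```yaml
# .github/workflows/traceloop-experiment.yml (hypothetical file name)
name: Run Traceloop experiment

on:
  pull_request:

jobs:
  experiment:
    runs-on: ubuntu-latest
    steps:
      - name: Check out the repository
        uses: actions/checkout@v4

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: "3.11"

      - name: Install dependencies
        # Assumes your dependencies are listed in requirements.txt.
        run: pip install -r requirements.txt

      - name: Run experiment
        env:
          # Secrets must exist in the repository settings and be
          # passed to the step explicitly to be visible to the script.
          TRACELOOP_API_KEY: ${{ secrets.TRACELOOP_API_KEY }}
          OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
        # Hypothetical path to your experiment script.
        run: python experiments/run_experiment.py
```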

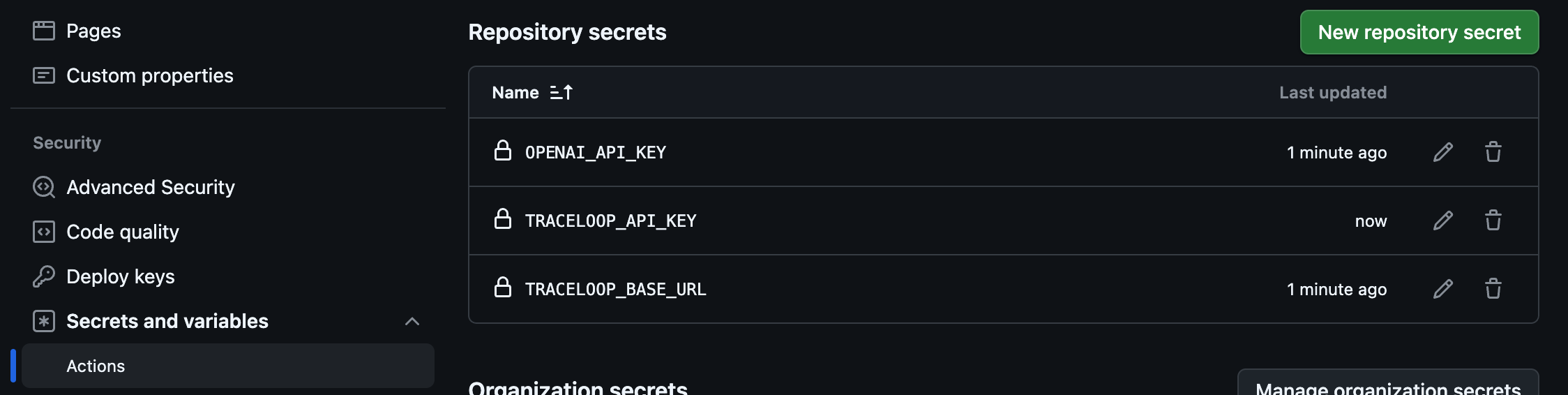

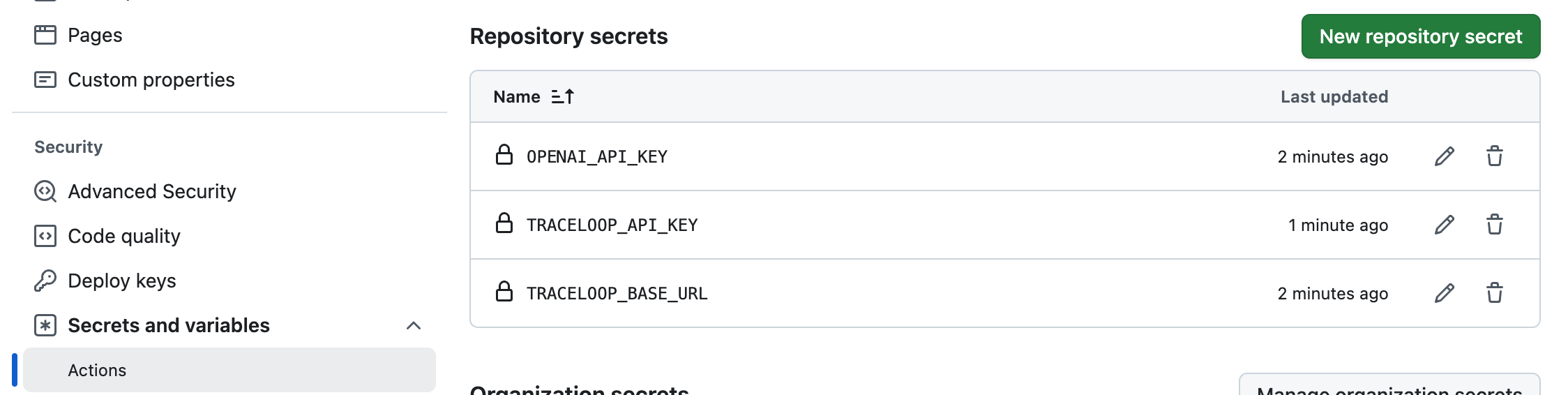

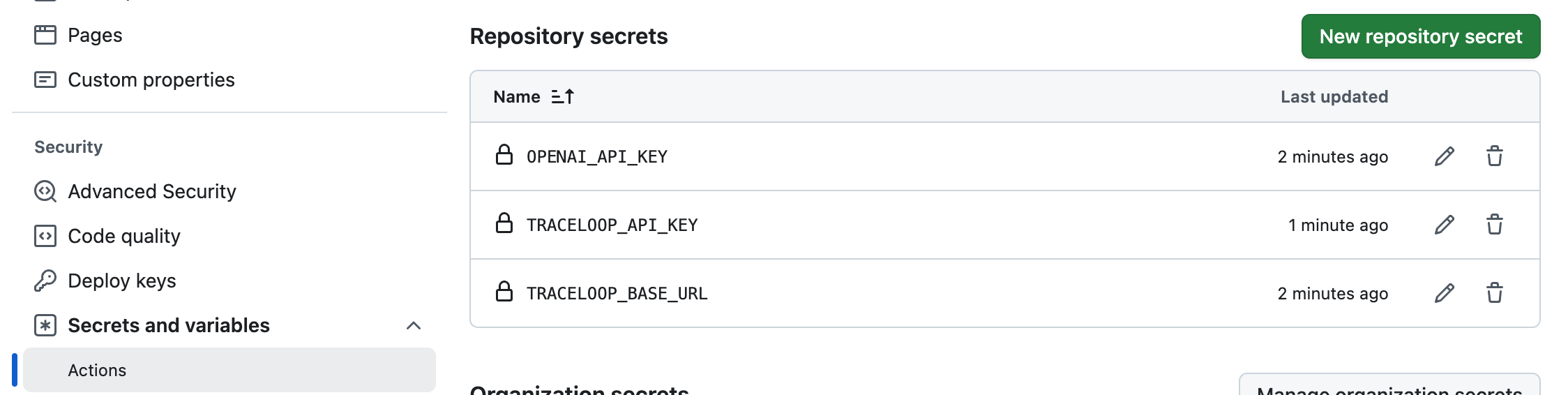

Add secrets to your GitHub repository

Make sure all secrets used in your experiment script (like OPENAI_API_KEY) are added to both:

- Your GitHub Actions workflow configuration

- Your GitHub repository secrets

Add TRACELOOP_API_KEY to your GitHub repository secrets. Generate one in Settings →
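A secret that is missing from either the repository or the workflow configuration typically only surfaces as an authentication error partway through a run. One way to catch this earlier is to check for the required environment variables at the top of the experiment script. The sketch below assumes the script needs `TRACELOOP_API_KEY` and `OPENAI_API_KEY`; the helper names `missing_secrets` and `require_secrets` are hypothetical.

```python
import os
import sys
from typing import Iterable, List, Mapping, Optional

# Secrets this sketch assumes the experiment script depends on.
REQUIRED_SECRETS = ("TRACELOOP_API_KEY", "OPENAI_API_KEY")


def missing_secrets(
    env: Optional[Mapping[str, str]] = None,
    required: Iterable[str] = REQUIRED_SECRETS,
) -> List[str]:
    """Return the names of required secrets that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


def require_secrets(env: Optional[Mapping[str, str]] = None) -> None:
    """Exit with a readable message if any required secret is missing."""
    missing = missing_secrets(env)
    if missing:
        sys.exit("Missing required secrets: " + ", ".join(missing))
```

Calling `require_secrets()` before the experiment starts makes the CI job fail immediately with the names of the missing variables, without printing any secret values.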

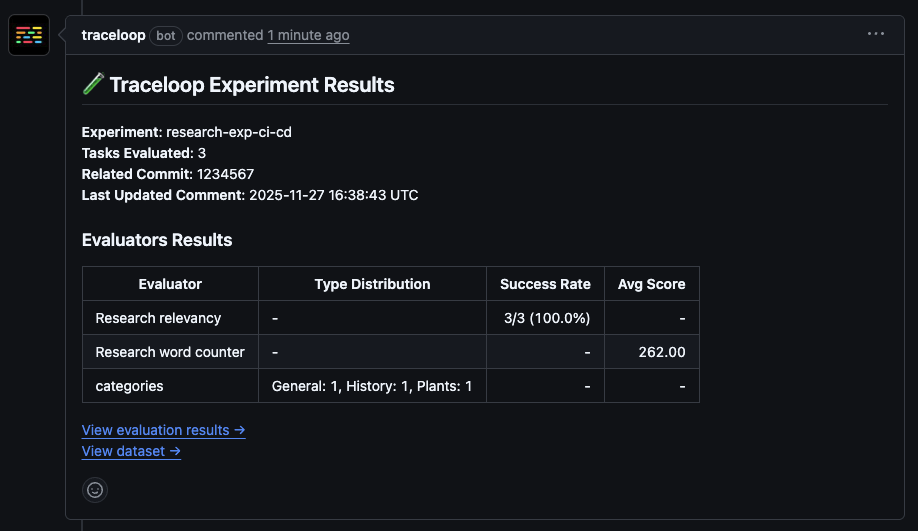

View Results in Your Pull Request

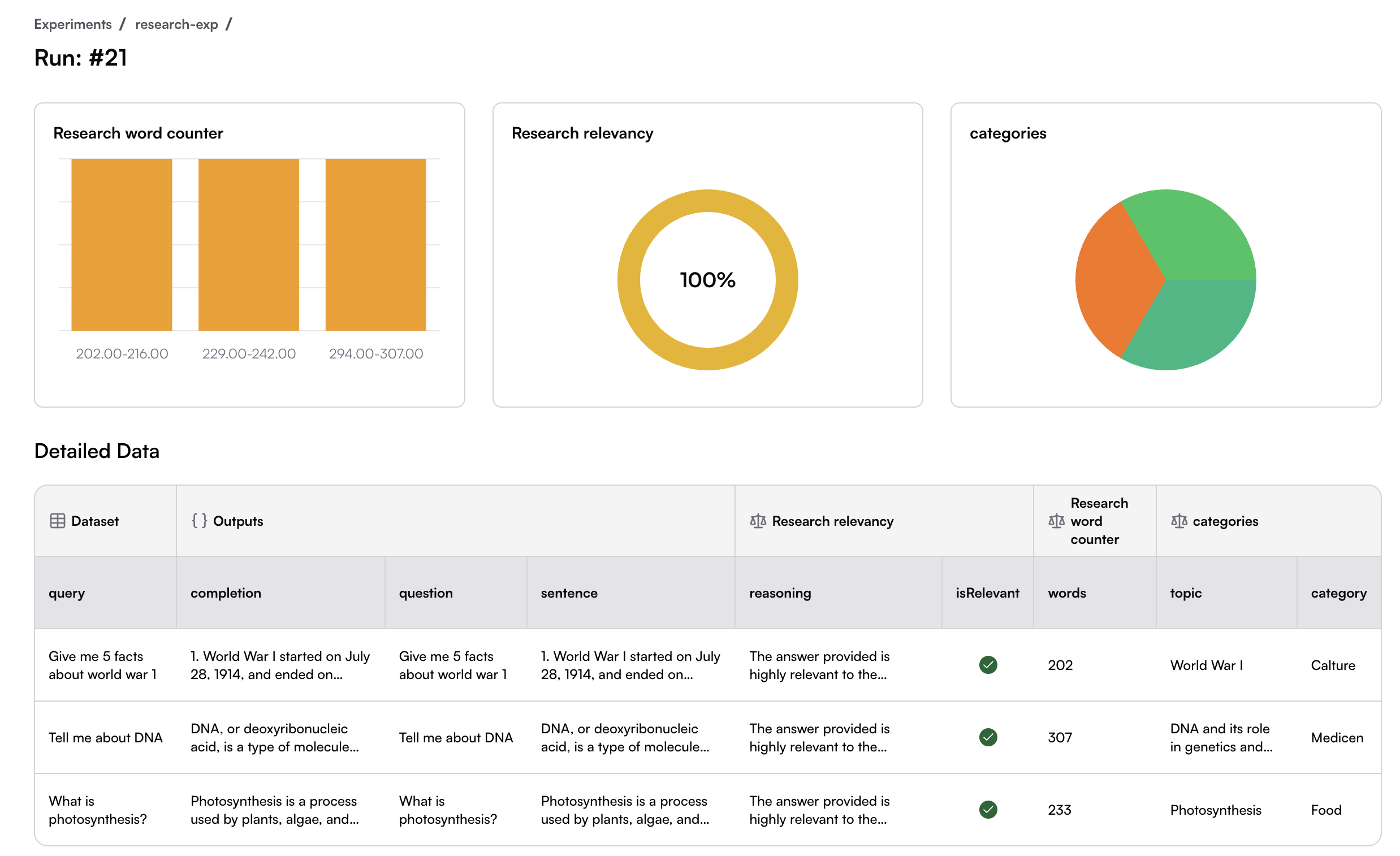

Once configured, every pull request will automatically trigger the experiment run. The Traceloop GitHub App will post a comment on the PR summarizing the evaluation results, including:

- Overall experiment status

- Evaluation metrics

- Link to detailed results

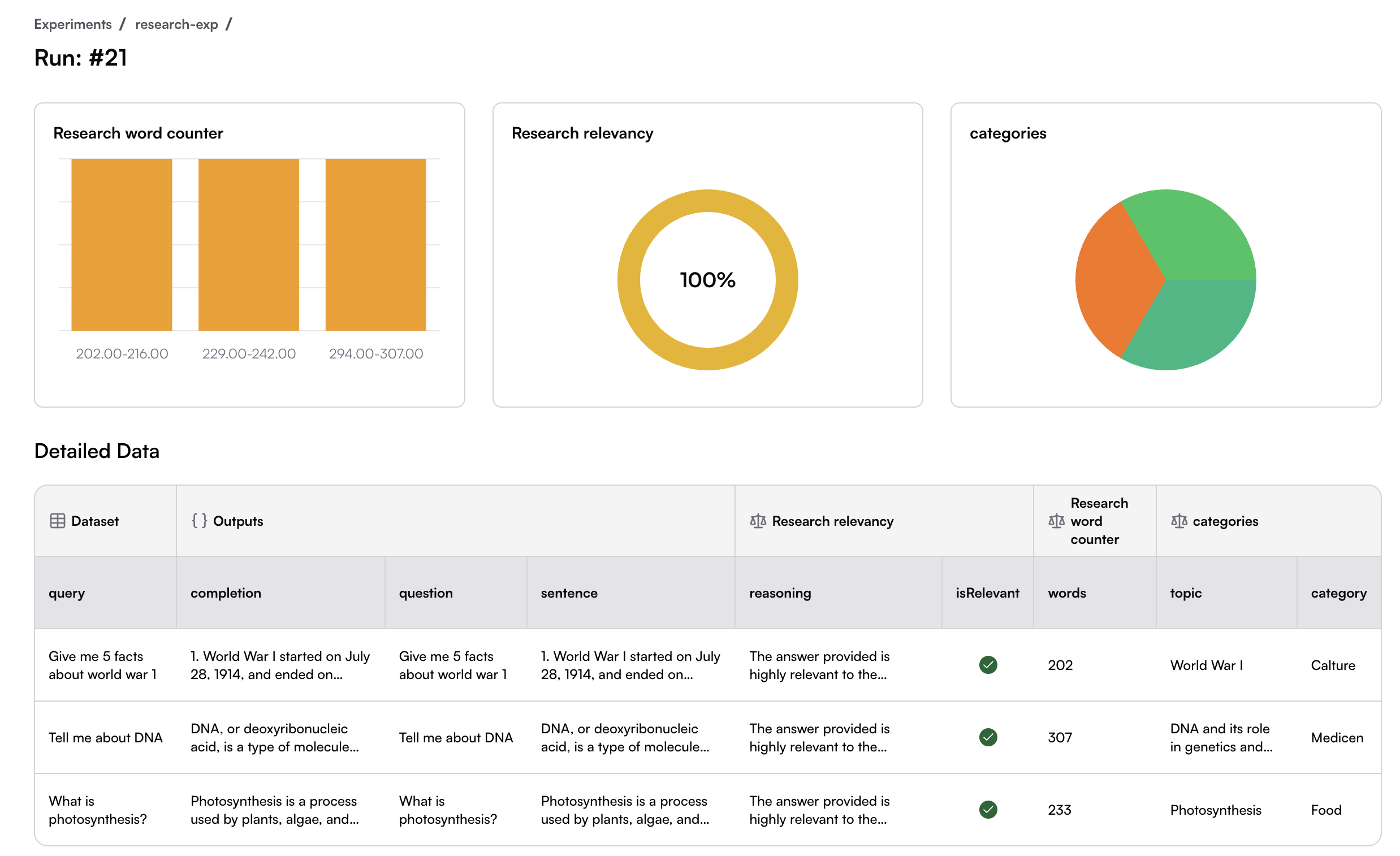

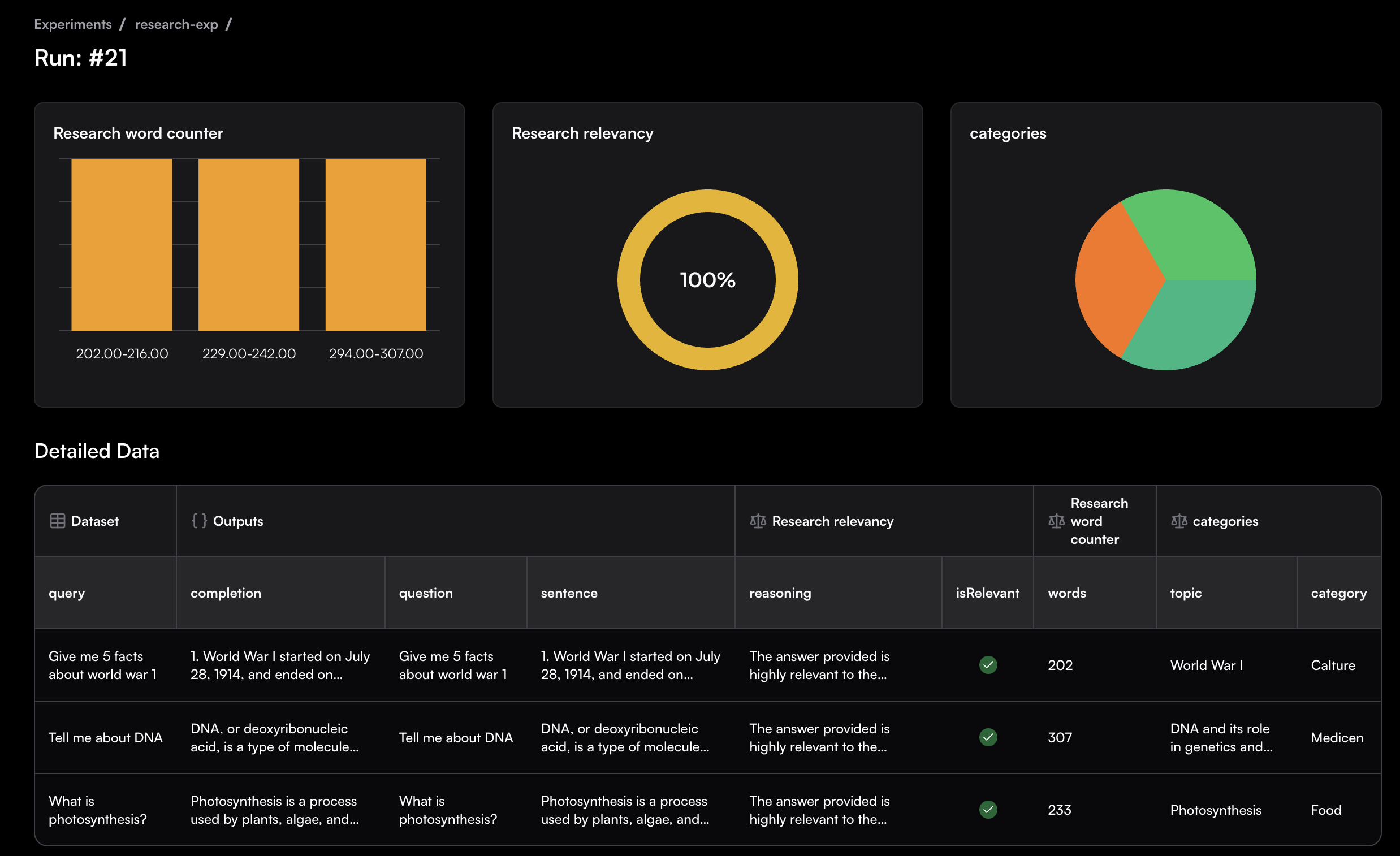

Experiment Dashboard

Click on the link in the PR comment to view the complete experiment run in the Traceloop experiment dashboard, where you can:

- Review individual test cases and their evaluator scores

- Analyze which specific inputs passed or failed

- Compare results with previous runs to track improvements or regressions

- Drill down into evaluator reasoning and feedback